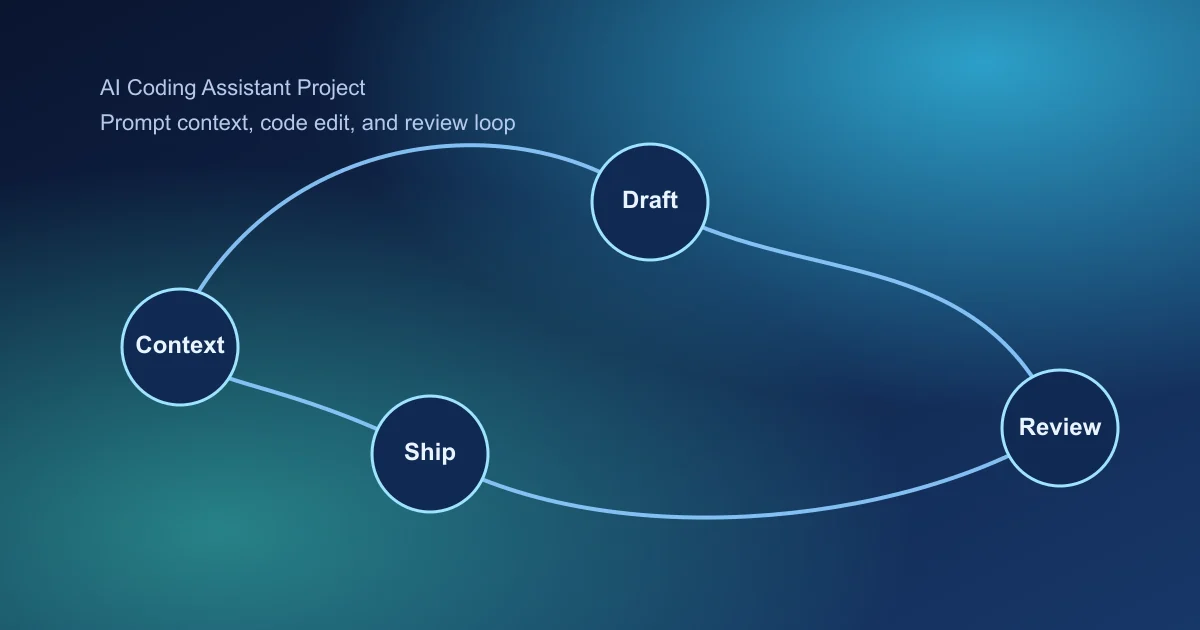

Many AI coding assistant projects fail because the assistant sees too little of the repository and its output passes through weak quality gates. This guide helps you build a practical, production-safe assistant workflow.

Why Most Coding Assistants Stall After Demo Stage

Initial demos often look strong because the tasks are simple and isolated. Real repositories add architecture constraints, test debt, and team review standards that generation without repository context cannot account for.

Sustainable value comes from combining context retrieval with strict quality gates, not from bigger prompts alone.

Key Takeaways

- Start with one high-frequency developer workflow.

- Use context retrieval from repository structure and history.

- Enforce test and policy gates before merge suggestions.

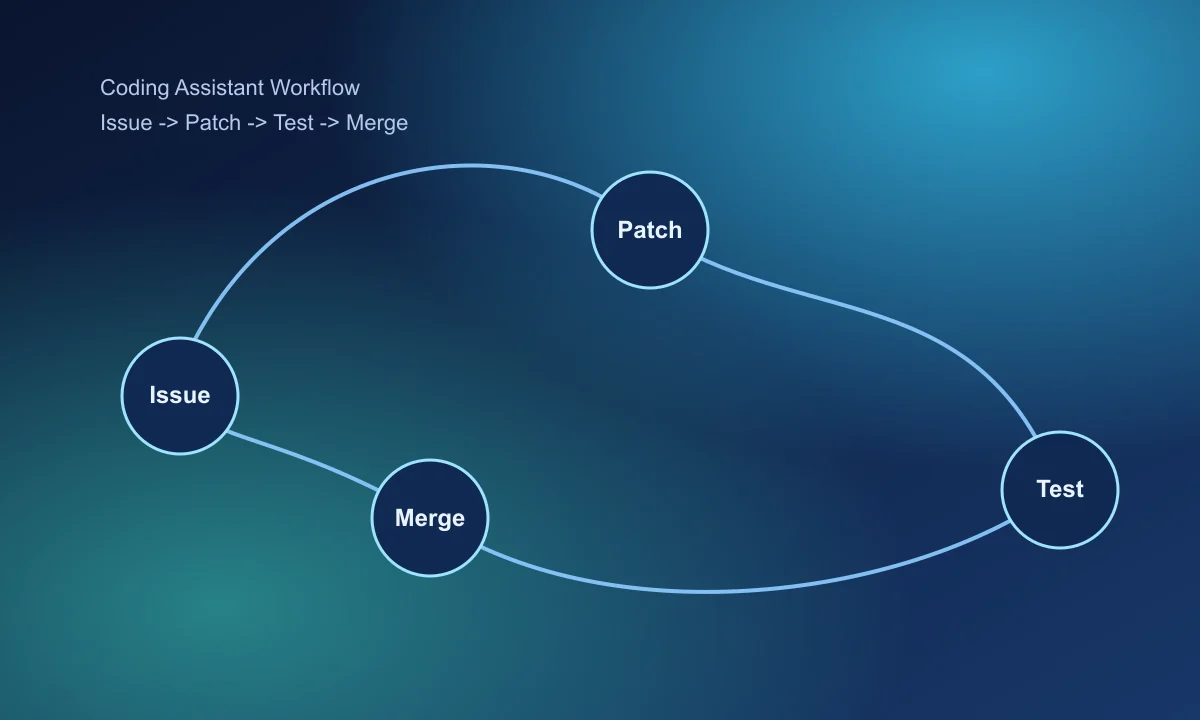

1. Define a Narrow Developer Workflow

Good starting workflows:

- Issue summary -> patch draft

- Failing test -> likely fix candidates

- Refactor request -> scoped code transformation

2. Build Repository-Aware Context Retrieval

- File relevance by module and ownership

- Recent commit and PR context for changed behavior

- Test and lint config visibility

- Coding standard and security policy context
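
The first two signals above (module relevance, ownership, and recent change activity) can be combined into a simple ranking. The sketch below is only an illustration: `FileInfo`, `score_file`, `rank_context`, and the weights are hypothetical, and the ownership and commit counts are assumed to be collected elsewhere (for example from an owners file and the commit log). Test, lint, and policy files are not modeled here.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class FileInfo:
    path: str               # repository-relative path
    owners: frozenset       # owning teams or people (hypothetical field)
    recent_commits: int     # commits touching the file in a recent window


def score_file(info: FileInfo, issue_paths: list, issue_team: str) -> float:
    """Heuristic relevance score. The weights are illustrative, not tuned."""
    score = 0.0
    file_dir = PurePosixPath(info.path).parent
    # Module signal: the file sits in or under a directory named by the issue.
    for p in issue_paths:
        module = PurePosixPath(p).parent
        if file_dir == module or module in file_dir.parents:
            score += 2.0
            break
    # Ownership signal: the team that filed the issue owns the file.
    if issue_team in info.owners:
        score += 1.0
    # History signal: recently changed files, capped so churn cannot dominate.
    score += 0.2 * min(info.recent_commits, 5)
    return score


def rank_context(files: list, issue_paths: list, issue_team: str, limit: int = 3) -> list:
    """Return up to `limit` files with a positive score, best first."""
    scored = [(score_file(f, issue_paths, issue_team), f) for f in files]
    scored = [(s, f) for s, f in scored if s > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1].path))
    return [f for _, f in scored[:limit]]


# Made-up repository snapshot for illustration only.
files = [
    FileInfo("src/auth/login.py", frozenset({"identity"}), 4),
    FileInfo("src/auth/tokens.py", frozenset({"identity"}), 1),
    FileInfo("src/billing/invoice.py", frozenset({"payments"}), 9),
    FileInfo("docs/README.md", frozenset(), 0),
]
ranked = rank_context(files, issue_paths=["src/auth/login.py"], issue_team="identity")
ranked_paths = [f.path for f in ranked]
```

Capping the commit count keeps a frequently edited but unrelated file from outranking files in the affected module.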

3. Structure Patch Generation Flow

- Interpret issue scope and constraints.

- Propose minimal patch set and reasoning.

- Generate test updates where needed.

- Output review notes and rollback considerations.
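
The four steps above could be wired together roughly as follows. This is a minimal sketch, assuming Python 3.9+ and that the model calls are injected as plain functions: `Issue`, `PatchProposal`, `draft_patch`, `propose_diffs`, and `propose_tests` are hypothetical names, and the lambdas in the example stand in for a real generation backend.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Issue:
    title: str
    allowed_paths: list[str]  # path prefixes the patch may touch (hypothetical)


@dataclass
class PatchProposal:
    scope: str
    diffs: dict[str, str]  # file path -> proposed diff text
    reasoning: str
    test_updates: dict[str, str] = field(default_factory=dict)
    review_notes: list[str] = field(default_factory=list)
    rollback: str = ""


# Stand-in for a model call: takes a scope string, returns path -> text.
Generate = Callable[[str], dict[str, str]]


def draft_patch(issue: Issue, propose_diffs: Generate, propose_tests: Generate,
                max_files: int = 3) -> PatchProposal:
    # Step 1: interpret issue scope and constraints.
    scope = f"{issue.title} [paths: {', '.join(issue.allowed_paths)}]"

    # Step 2: propose a minimal patch set and reject anything out of scope.
    diffs = propose_diffs(scope)
    outside = [p for p in diffs if not p.startswith(tuple(issue.allowed_paths))]
    if outside:
        raise ValueError(f"patch touches files outside scope: {outside}")
    if len(diffs) > max_files:
        raise ValueError(f"patch touches {len(diffs)} files, limit is {max_files}")

    # Step 3: generate test updates where needed.
    test_updates = propose_tests(scope)

    # Step 4: output review notes and rollback considerations.
    changed = sorted(diffs)
    return PatchProposal(
        scope=scope,
        diffs=diffs,
        reasoning=f"Smallest change found for: {issue.title}",
        test_updates=test_updates,
        review_notes=[f"Changed: {path}" for path in changed],
        rollback=f"Revert the commit touching: {', '.join(changed)}",
    )


# Example with placeholder generators instead of a real model.
issue = Issue("Fix null check in login", ["src/auth/"])
proposal = draft_patch(
    issue,
    propose_diffs=lambda scope: {"src/auth/login.py": "--- placeholder diff ---"},
    propose_tests=lambda scope: {"tests/auth/test_login.py": "# placeholder test"},
)
```

Failing loudly when a draft leaves the declared scope keeps the "minimal patch" step enforceable rather than advisory.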

4. Add Mandatory Quality Gates

- Static analysis and lint pass requirements

- Unit/integration test pass checks

- Security and dependency policy scans

- Human reviewer approval before merge
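
One way to make these gates mandatory is to treat each automated check as a command whose exit code decides pass or fail, and to require human approval on top. The sketch below uses only the standard library; `GateResult`, `run_command_gate`, and `merge_allowed` are hypothetical names, and the commands mentioned in the comment are examples of what a team might plug in, not a required toolchain.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class GateResult:
    name: str
    passed: bool
    detail: str = ""


def run_command_gate(name: str, cmd: list) -> GateResult:
    """Run one check; a zero exit code counts as a pass."""
    try:
        proc = subprocess.run(cmd, capture_output=True, text=True)
    except FileNotFoundError:
        # A missing tool is treated as a failed gate, never as a skipped one.
        return GateResult(name, False, f"command not found: {cmd[0]}")
    output = (proc.stdout + proc.stderr).strip()
    return GateResult(name, proc.returncode == 0, output[-500:])


def merge_allowed(results: list, human_approved: bool) -> bool:
    """All gates must pass, at least one gate must exist, and a human must approve."""
    return bool(results) and all(r.passed for r in results) and human_approved


# In a real setup the commands might be a linter, a test runner, and a
# dependency scanner (for example ["ruff", "check", "."] or ["pytest", "-q"]),
# assuming those tools are installed. The stand-ins below only set exit codes.
lint = run_command_gate("lint", [sys.executable, "-c", "raise SystemExit(0)"])
tests = run_command_gate("tests", [sys.executable, "-c", "raise SystemExit(1)"])

for result in (lint, tests):
    print(f"{result.name}: {'pass' if result.passed else 'FAIL'}")
print("merge allowed:", merge_allowed([lint, tests], human_approved=True))
```

Requiring a non-empty result list means a misconfigured pipeline with no gates cannot approve a patch by default.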

5. Measure Assistant Impact Correctly

- Accepted patch rate by issue type

- Time-to-first-draft and time-to-merge

- Post-merge defect rate comparison

- Developer satisfaction and trust signals
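
The first three metrics above can be computed from a simple log of assistant-generated patches. The following is a sketch under assumptions: `PatchRecord` and its fields are a hypothetical schema, the sample records are made up for illustration, and developer satisfaction is left out because it usually comes from surveys rather than patch logs.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class PatchRecord:
    issue_type: str
    accepted: bool
    hours_to_first_draft: float
    hours_to_merge: Optional[float]  # None if the patch was never merged
    post_merge_defects: int


def accepted_rate_by_type(records: list) -> dict:
    """Share of patches accepted, grouped by issue type."""
    totals = defaultdict(int)
    accepted = defaultdict(int)
    for r in records:
        totals[r.issue_type] += 1
        accepted[r.issue_type] += int(r.accepted)
    return {t: accepted[t] / totals[t] for t in totals}


def median_hours_to_merge(records: list) -> Optional[float]:
    """Median time-to-merge over merged patches, or None if nothing merged."""
    merged = [r.hours_to_merge for r in records if r.hours_to_merge is not None]
    return median(merged) if merged else None


def defects_per_merged_patch(records: list) -> Optional[float]:
    """Post-merge defects divided by merged patches, for baseline comparison."""
    merged = [r for r in records if r.hours_to_merge is not None]
    if not merged:
        return None
    return sum(r.post_merge_defects for r in merged) / len(merged)


# Made-up sample data for illustration only.
records = [
    PatchRecord("bug", True, 0.5, 4.0, 0),
    PatchRecord("bug", True, 0.7, 10.0, 1),
    PatchRecord("bug", False, 0.4, None, 0),
    PatchRecord("refactor", True, 1.2, 6.0, 0),
    PatchRecord("refactor", False, 1.0, None, 0),
]
rates = accepted_rate_by_type(records)
merge_median = median_hours_to_merge(records)
defect_rate = defects_per_merged_patch(records)
```

Running the same functions over patches written without the assistant gives the baseline needed for the post-merge defect comparison.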

6. Roll Out by Team and Use Case

Start with one team and one workflow. Expand only after quality signals stay stable across multiple sprint cycles.
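
An expansion decision like this can be made explicit as a check over recent sprint data. The sketch below is one possible rule, not a recommended policy: `SprintStats`, `ready_to_expand`, and all threshold values are hypothetical and should be set from your own baseline.

```python
from dataclasses import dataclass


@dataclass
class SprintStats:
    accepted_rate: float  # accepted patches / generated patches
    defect_rate: float    # post-merge defects / merged patches


def ready_to_expand(history: list, min_sprints: int = 3,
                    min_accepted_rate: float = 0.5,
                    max_defect_rate: float = 0.1) -> bool:
    """True only if each of the last `min_sprints` sprints met both thresholds."""
    if len(history) < min_sprints:
        return False  # not enough history to call the signal stable
    recent = history[-min_sprints:]
    return all(
        s.accepted_rate >= min_accepted_rate and s.defect_rate <= max_defect_rate
        for s in recent
    )


# Made-up history: one weak early sprint followed by three stable ones.
history = [
    SprintStats(0.30, 0.20),
    SprintStats(0.55, 0.08),
    SprintStats(0.60, 0.05),
    SprintStats(0.58, 0.07),
]
expand = ready_to_expand(history)
```

Checking every recent sprint, rather than an average, prevents one strong sprint from hiding an unstable one.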

Final Takeaway

AI coding assistants should optimize merge confidence, not just code generation speed. Strong context and strict quality gates are the core differentiators.

Continue with the AI agent project guide and AI project ideas.

Frequently Asked Questions

What should an AI coding assistant do in v1?

Start with one workflow such as issue-to-patch draft generation with repository-aware context and test execution hints.

How do I reduce unsafe code suggestions?

Use context boundaries, policy checks, and required test/lint gates before accepting generated patches.
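
A context boundary can be as simple as a path check applied to every generated patch before any other gate runs. The sketch below uses Python's standard `fnmatch` module; the `ALLOWED` and `DENIED` patterns and the function names are hypothetical and would come from your own policy.

```python
from fnmatch import fnmatchcase

# Hypothetical policy: where the assistant may write, and what it must never touch.
ALLOWED = ["src/auth/*", "tests/auth/*"]
DENIED = ["*.pem", "*/secrets/*", ".github/*"]


def path_permitted(path: str) -> bool:
    """A path must match an allowed pattern and no denied pattern."""
    if any(fnmatchcase(path, pattern) for pattern in DENIED):
        return False
    return any(fnmatchcase(path, pattern) for pattern in ALLOWED)


def boundary_violations(patch_paths: list) -> list:
    """Return the paths in a patch that fall outside the boundary."""
    return [p for p in patch_paths if not path_permitted(p)]


patch_paths = [
    "src/auth/login.py",
    "src/auth/secrets/key.pem",
    ".github/workflows/ci.yml",
]
violations = boundary_violations(patch_paths)
```

Note that `*` in `fnmatch` patterns also matches `/`, so `src/auth/*` covers nested directories; the deny list is checked first so it always wins.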

What metric matters most for coding assistant value?

Track the rate of accepted patches that also pass tests, alongside improvements in time-to-merge.