AI Competitor Monitoring Automation is most effective when applied to one real workflow owned by one accountable team. Use this page as a working playbook: scope tightly, instrument clearly, and improve every week.

Key Takeaways

- Define one high-impact workflow with one accountable owner.

- Instrument baseline and post-release metrics from day one.

- Run one weekly improvement cycle tied to one KPI.

1. Define scope before tools

Avoid broad rollout. Lock the user segment, trigger event, and business outcome first. This keeps implementation focused and measurable.
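One way to make the scope concrete is to write it down as a small record before any tooling is chosen. The sketch below is only an illustration: the `WorkflowScope` class, its field names, and the example values are assumptions, not part of any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowScope:
    """One workflow, one owner, one outcome (hypothetical structure)."""

    user_segment: str      # who consumes the output
    trigger_event: str     # what starts a run
    business_outcome: str  # the result the workflow is judged on
    owner: str             # the single accountable team or person

    def missing_fields(self) -> list[str]:
        """Return the names of fields left blank, so an unscoped rollout is caught early."""
        return [name for name, value in vars(self).items() if not value.strip()]


# Illustrative values only; replace with your own segment, trigger, and outcome.
scope = WorkflowScope(
    user_segment="product marketing",
    trigger_event="competitor pricing page changes",
    business_outcome="pricing brief delivered within one business day",
    owner="market-intelligence team",
)
print(scope.missing_fields())
```

If `missing_fields()` returns anything, the scope is not locked yet and tool selection can wait.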

2. Design the end-to-end workflow

Map the input, decision, review, and action stages. Include fallback and escalation paths so quality stays stable in production.

- Input capture and context validation

- AI processing stage with clear constraints

- Review and approval checkpoints

- Execution and operational logging
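The four stages above, plus the fallback path, can be sketched as a single function. This is a minimal sketch under stated assumptions: `run_workflow`, `RunResult`, the `analyze` and `approve` callables, the input keys, and the 0.8 confidence threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable

CONFIDENCE_THRESHOLD = 0.8  # assumed cutoff; tune against your own review data


@dataclass
class RunResult:
    status: str  # "executed", "escalated", or "rejected"
    log: list[str] = field(default_factory=list)


def run_workflow(
    raw_input: dict,
    analyze: Callable[[dict], tuple[str, float]],
    approve: Callable[[str], bool],
) -> RunResult:
    result = RunResult(status="rejected")

    # Stage 1: input capture and context validation
    if not raw_input.get("competitor") or not raw_input.get("content"):
        result.log.append("input rejected: missing competitor or content")
        return result

    # Stage 2: AI processing, constrained to return a summary and a confidence score
    summary, confidence = analyze(raw_input)
    result.log.append(f"analysis confidence={confidence:.2f}")

    # Fallback: low-confidence output is escalated to a human, not sent downstream
    if confidence < CONFIDENCE_THRESHOLD:
        result.status = "escalated"
        result.log.append("escalated: confidence below threshold")
        return result

    # Stage 3: review and approval checkpoint
    if not approve(summary):
        result.log.append("rejected at review")
        return result

    # Stage 4: execution and operational logging
    result.status = "executed"
    result.log.append(f"published summary for {raw_input['competitor']}")
    return result


# Usage with stub callables standing in for a real model and a real reviewer.
demo = run_workflow(
    {"competitor": "Acme", "content": "New pricing tier announced"},
    analyze=lambda item: ("Acme added a pricing tier", 0.92),
    approve=lambda summary: True,
)
print(demo.status, demo.log)
```

Passing the model and the reviewer in as callables keeps the stage order testable without calling a real AI service.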

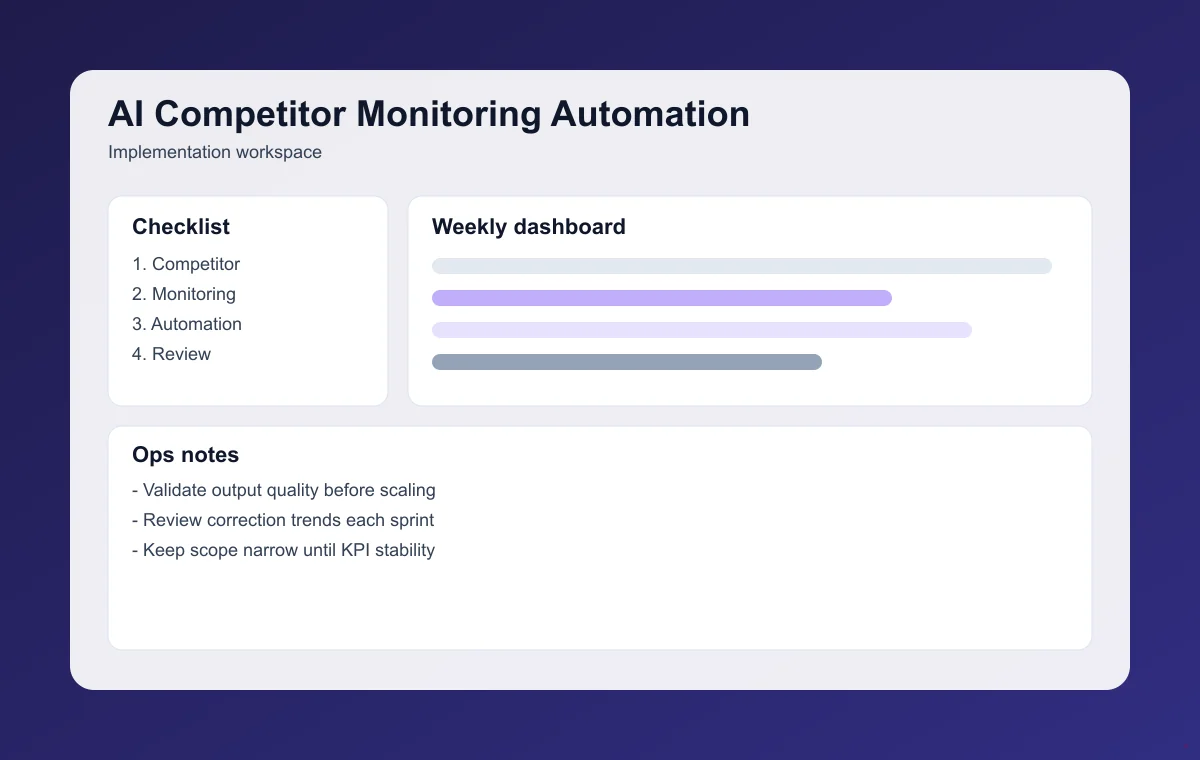

3. Instrument metrics from day one

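Track time-to-value, quality pass rate, correction frequency, and downstream business impact. Capture a baseline before release so every later change can be compared against it. Together these metrics show where the workflow loses time or accuracy, which makes it clearer what to fix next.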
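As a rough illustration, the first three metrics can be computed from a simple run log. The record layout and the sample numbers below are made up for the example. Downstream business impact is left out because its definition depends on the business.

```python
from statistics import median

# Hypothetical run log: one record per workflow run (sample data, not real results).
runs = [
    {"minutes_to_value": 42, "passed_review": True, "corrections": 0},
    {"minutes_to_value": 65, "passed_review": True, "corrections": 2},
    {"minutes_to_value": 30, "passed_review": False, "corrections": 3},
    {"minutes_to_value": 51, "passed_review": True, "corrections": 1},
]


def summarize(run_log: list[dict]) -> dict:
    """Summarize time-to-value, quality pass rate, and correction frequency."""
    total = len(run_log)
    if total == 0:
        raise ValueError("run_log is empty; nothing to summarize")
    return {
        "median_minutes_to_value": median(r["minutes_to_value"] for r in run_log),
        "quality_pass_rate": sum(r["passed_review"] for r in run_log) / total,
        "corrections_per_run": sum(r["corrections"] for r in run_log) / total,
    }


print(summarize(runs))
# {'median_minutes_to_value': 46.5, 'quality_pass_rate': 0.75, 'corrections_per_run': 1.5}
```

Running the same summary on the pre-release baseline and on each post-release week gives a like-for-like comparison.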

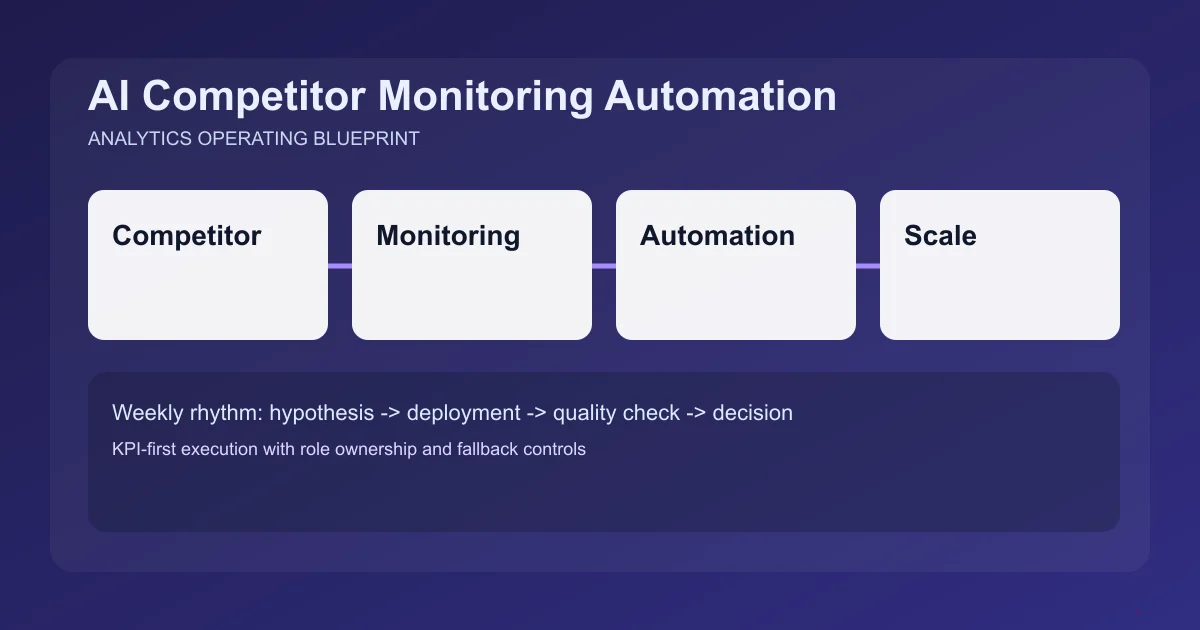

4. Run a weekly execution loop

- Prioritize one improvement linked to one KPI.

- Ship with quality gates and fallback behavior.

- Review failures and wins with evidence.

- Decide what to scale, revise, or stop.
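The final step of the loop, deciding whether to scale, revise, or stop, is easier to keep honest when the rule is written down in advance. The function below is one possible rule, not a recommended standard: the name `weekly_decision` and the default thresholds (`min_lift`, `min_quality`) are assumptions, and it assumes a KPI where higher is better.

```python
def weekly_decision(
    baseline: float,
    current: float,
    quality_pass_rate: float,
    min_lift: float = 0.05,    # assumed: required relative KPI improvement
    min_quality: float = 0.9,  # assumed: required share of outputs passing review
) -> str:
    """Return "scale", "revise", or "stop" for a KPI where higher is better."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    lift = (current - baseline) / baseline
    if lift >= min_lift and quality_pass_rate >= min_quality:
        return "scale"   # KPI moved enough and quality held
    if lift < 0:
        return "stop"    # KPI regressed against the baseline
    return "revise"      # some movement, or quality too low to scale


print(weekly_decision(baseline=100, current=120, quality_pass_rate=0.95))  # scale
print(weekly_decision(baseline=100, current=102, quality_pass_rate=0.95))  # revise
print(weekly_decision(baseline=100, current=90, quality_pass_rate=0.95))   # stop
```

Agreeing on the thresholds before the week starts keeps the review focused on evidence, not on debating the bar after the fact.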

5. Avoid common implementation mistakes

- Expanding scope before the KPI shows stable movement

- Running the weekly optimization cadence without an owner

- Shipping without a fallback path for low-confidence outputs

- Tracking vanity metrics instead of value metrics

Final takeaway

AI Competitor Monitoring Automation produces durable results when teams keep scope narrow, measure rigorously, and improve continuously.

For deeper implementation, continue with AI Pricing Experimentation System Guide and AI Customer Support Knowledge Assistant Guide. Then use the full article library to plan your next market intelligence sprint.

Frequently Asked Questions

Where should teams start with AI Competitor Monitoring Automation?

Start with one narrow workflow and one KPI owner. Ship a small v1 and improve weekly based on measurable outcomes.

How quickly can this show impact?

Teams can often see a directional signal within 1-2 weeks, provided instrumentation and a review cadence are already in place.

What should we track first?

Track time-to-value, quality pass rate, and correction frequency before scaling scope.