AI Prompt Engineering for Products is most useful when applied to one real team process, not as a broad transformation project. Use this guide as a practical working document: pick one process, instrument it, and improve it week by week.

Key Takeaways

- Anchor execution around one narrow, high-impact workflow.

- Ship weekly improvements tied to observable KPI movement.

- Optimize for output quality and user trust before adding adjacent features.

1. Define scope before tools

Many first releases fail because they are over-scoped. Define one user segment, one workflow trigger, and one measurable outcome before selecting tools.
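
The three scope decisions can be written down as a small config object so they are fixed before any tool comparison starts. The sketch below is a minimal Python illustration. The `ScopeSpec` dataclass and its field names are hypothetical and do not come from any tool, and the example segment, trigger, metric, and target value are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopeSpec:
    """Scope of a first release: one segment, one trigger, one outcome (hypothetical shape)."""

    user_segment: str      # who the workflow serves
    workflow_trigger: str  # the event that starts one run of the workflow
    outcome_metric: str    # the single number the release is judged on
    target: float          # hypothetical target value for that metric

    def __post_init__(self) -> None:
        # Refuse to create a spec with any blank field, so scope is settled
        # before anyone starts comparing tools.
        for name in ("user_segment", "workflow_trigger", "outcome_metric"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must be defined before selecting tools")


# Made-up example values for illustration only.
scope = ScopeSpec(
    user_segment="support agents on the billing queue",
    workflow_trigger="new ticket tagged 'refund'",
    outcome_metric="correction rate of drafted replies",
    target=0.15,
)
```

Because the spec is frozen, changing scope means creating a new spec, which keeps scope changes visible in review.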

2. Design the end-to-end workflow

Map the full workflow from input to decision to action. Include review, fallback, and escalation paths so quality remains stable under real load.

- Input and context collection

- AI generation or decision stage

- Human review and approval

- Action execution and logging

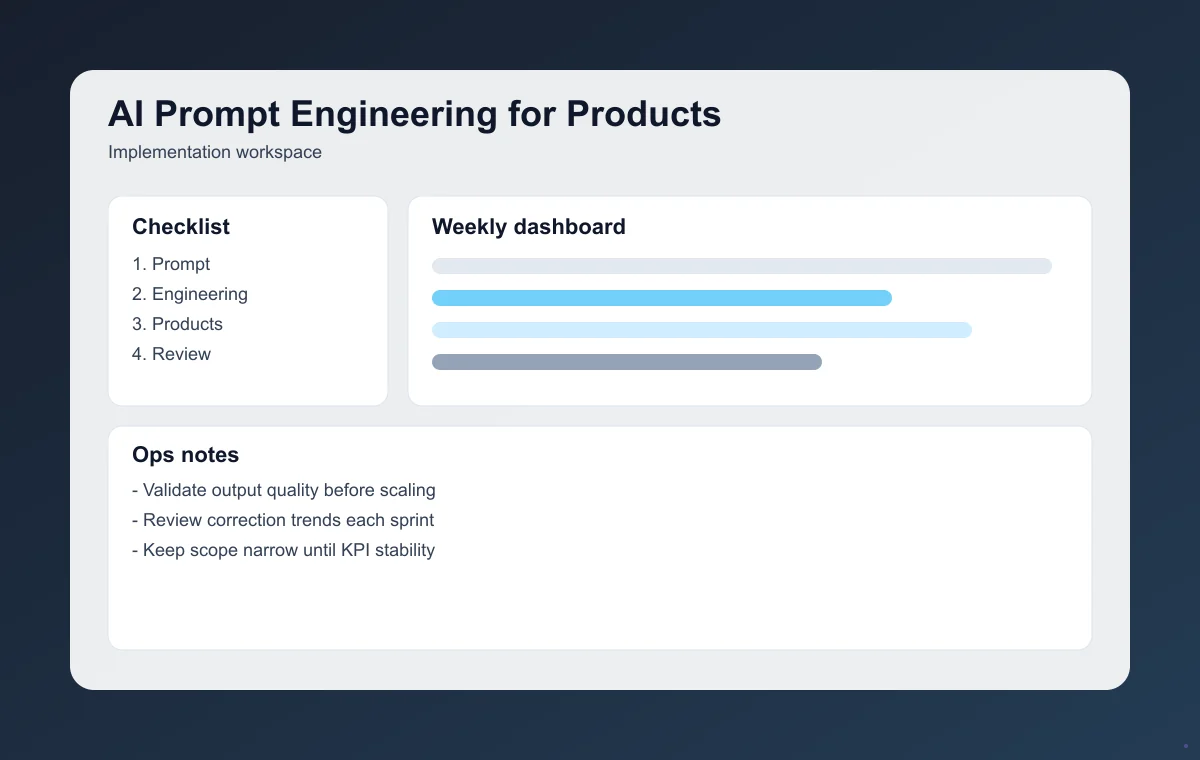
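
The four stages, plus the fallback and escalation paths, can be sketched as one function that takes each stage as a callable. This is a minimal Python sketch, not a prescribed architecture. `Draft`, `run_workflow`, the confidence score, and the 0.7 threshold are all hypothetical, and the sketch assumes the generation stage reports a confidence value between 0 and 1.

```python
import logging
from dataclasses import dataclass
from typing import Callable

log = logging.getLogger("ai_workflow")  # hypothetical logger name


@dataclass
class Draft:
    text: str
    confidence: float  # 0.0-1.0; assumes the generation stage reports one


def run_workflow(
    raw_input: str,
    collect_context: Callable[[str], str],
    generate: Callable[[str, str], Draft],
    review: Callable[[Draft], bool],
    execute: Callable[[str], None],
    fallback: Callable[[str], None],
    escalate: Callable[[str, Draft], None],
    min_confidence: float = 0.7,
) -> str:
    """Run one input through the four stages and return the path it took."""
    # 1. Input and context collection
    context = collect_context(raw_input)

    # 2. AI generation or decision stage
    draft = generate(raw_input, context)

    # Fallback: low-confidence output never reaches a reviewer or an action.
    if draft.confidence < min_confidence:
        fallback(raw_input)
        log.info("fallback (confidence %.2f < %.2f)", draft.confidence, min_confidence)
        return "fallback"

    # 3. Human review and approval; a rejection escalates instead of executing.
    if not review(draft):
        escalate(raw_input, draft)
        log.info("escalated after review rejection")
        return "escalated"

    # 4. Action execution and logging
    execute(draft.text)
    log.info("executed (confidence %.2f)", draft.confidence)
    return "executed"
```

Because every stage is passed in, each one can be replaced with a stub in tests. For example, a `review` callable that always returns `False` should send every high-confidence draft down the escalation path.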

3. Instrument metrics from day one

Record a baseline before launch, then compare post-launch performance against it every week on time-to-value, completion quality, correction rate, and business impact. Together these show whether output quality and user trust are improving.

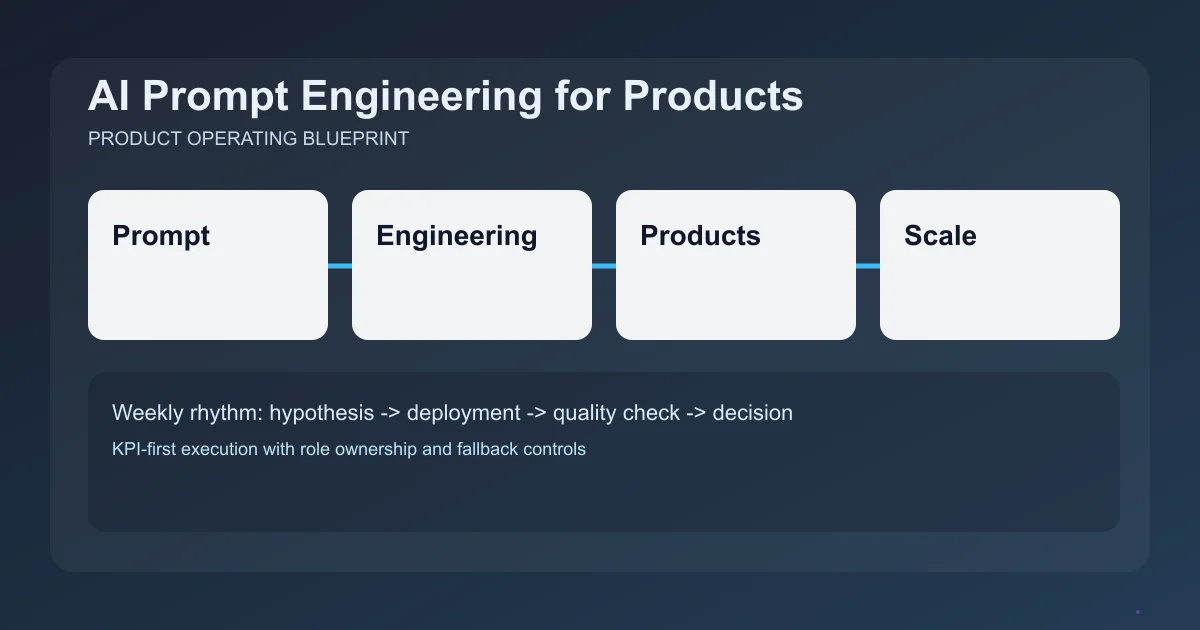
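
Weekly KPI deltas are simple arithmetic once the baseline has been recorded from the existing process before launch. The Python sketch below makes several assumptions. The field names are hypothetical. It uses the median for time-to-value and a reviewer rubric score as a stand-in for completion quality. It leaves business impact out because that metric is specific to each team. The numbers are made up for illustration.

```python
from dataclasses import dataclass
from statistics import mean, median


@dataclass
class WeeklyKpis:
    """One week of measurements; field names are illustrative."""

    minutes_to_value: list[float]  # trigger-to-usable-result time per run
    quality_scores: list[float]    # reviewer rubric score per output (assumed 1-5)
    outputs: int                   # outputs delivered this week
    corrected: int                 # outputs a human edited before use

    def summary(self) -> dict[str, float]:
        return {
            "median_minutes_to_value": median(self.minutes_to_value),
            "mean_quality_score": mean(self.quality_scores),
            "correction_rate": self.corrected / self.outputs if self.outputs else 0.0,
        }


def weekly_deltas(baseline: WeeklyKpis, current: WeeklyKpis) -> dict[str, float]:
    """Current minus baseline per KPI. Lower is better for time-to-value and
    correction rate; higher is better for the quality score."""
    base, cur = baseline.summary(), current.summary()
    return {name: cur[name] - base[name] for name in base}


# Made-up numbers: the pre-launch baseline and one post-launch week.
baseline = WeeklyKpis([40.0, 45.0, 50.0], [3.0, 3.5, 4.0], outputs=200, corrected=50)
week_3 = WeeklyKpis([25.0, 30.0, 35.0], [3.5, 4.0, 4.5], outputs=200, corrected=30)
deltas = weekly_deltas(baseline, week_3)
```

With these numbers, `deltas` reports time-to-value 15 minutes lower, the mean quality score up by 0.5, and the correction rate about 0.10 lower (from 0.25 to 0.15).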

4. Run a weekly execution loop

- Prioritize one change with clear KPI ownership.

- Ship the change with fallback and QA checkpoints.

- Review metric shift and failure patterns after deployment.

- Decide whether to keep, revise, or roll back the change in the weekly ops review.
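
The keep, revise, or roll-back call can be made explicit so the weekly review applies the same rule every time. The Python sketch below shows one possible rule, not one the guide prescribes. It rolls back if the owned KPI drops or a guardrail metric such as correction rate gets worse. It keeps the change if the KPI improves by at least a minimum gain, and otherwise revises it. `decide`, `top_failure_patterns`, and the 0.02 minimum gain are hypothetical, and the sketch assumes reviewers tag failure examples with a category.

```python
from collections import Counter
from enum import Enum


class Decision(Enum):
    KEEP = "keep"
    REVISE = "revise"
    ROLL_BACK = "roll back"


def decide(kpi_gain: float, guardrail_regression: float, min_gain: float = 0.02) -> Decision:
    """Keep/revise/roll-back call for the one change shipped this week.

    kpi_gain: change in the owned KPI, signed so positive means better.
    guardrail_regression: change in a guardrail such as correction rate,
        signed so positive means worse.
    min_gain: hypothetical smallest gain worth keeping.
    """
    if guardrail_regression > 0 or kpi_gain < 0:
        return Decision.ROLL_BACK  # the change made things measurably worse
    if kpi_gain >= min_gain:
        return Decision.KEEP       # clear improvement, nothing regressed
    return Decision.REVISE         # flat or too small to trust: iterate on it


def top_failure_patterns(failure_tags: list[str], n: int = 3) -> list[tuple[str, int]]:
    """Most frequent failure categories tagged by reviewers during the week."""
    return Counter(failure_tags).most_common(n)
```

The strict zero tolerance on the guardrail keeps the sketch short. A real team would probably allow some week-to-week noise before rolling back.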

5. Avoid common implementation mistakes

- Broad scope before baseline metrics exist

- No clear owner for weekly optimization

- Missing fallback path for low-confidence outputs

- Scaling channels before quality stabilizes
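
The four mistakes above can be checked mechanically before any expansion. This Python sketch returns a list of blockers, where an empty list means it is safe to expand. The field names are hypothetical, and the default of two stable cycles mirrors the expansion rule in the FAQ ("at least two stable review cycles").

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReadinessState:
    has_baseline: bool     # baseline metrics recorded before launch
    owner: Optional[str]   # person who owns weekly optimization
    has_fallback: bool     # low-confidence outputs route to a fallback path
    stable_cycles: int     # consecutive weekly reviews with quality on target


def scaling_blockers(state: ReadinessState, required_stable_cycles: int = 2) -> list[str]:
    """Return the reasons not to expand scope yet; an empty list means go."""
    blockers = []
    if not state.has_baseline:
        blockers.append("no baseline metrics")
    if not state.owner:
        blockers.append("no owner for weekly optimization")
    if not state.has_fallback:
        blockers.append("no fallback for low-confidence outputs")
    if state.stable_cycles < required_stable_cycles:
        blockers.append(
            f"{state.stable_cycles} stable review cycle(s); need {required_stable_cycles}"
        )
    return blockers
```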

Final takeaway

AI Prompt Engineering for Products drives results when teams treat it as a product operating system: focused scope, clear metrics, and disciplined weekly iteration.


Frequently Asked Questions

What is the first milestone for AI Prompt Engineering for Products?

Define one measurable use case and ship a minimum reliable workflow in week one. The goal is an early signal on output quality and user trust.

How often should the team review performance?

Run a weekly review with KPI deltas, failure examples, and one prioritized change for the next cycle.

When should we expand scope?

Expand only after at least two stable review cycles with sustained quality and measurable impact.