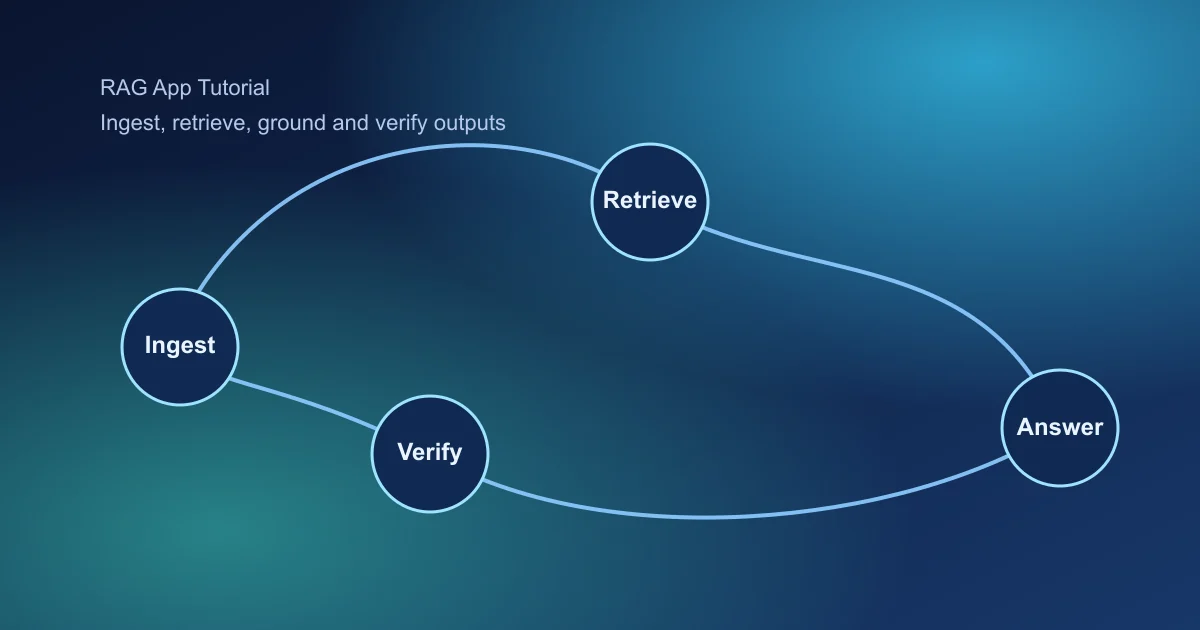

Retrieval-augmented generation (RAG) tutorials are popular because teams need accurate AI answers grounded in proprietary knowledge. This guide focuses on practical implementation choices that improve trust and reduce hallucination risk.

When RAG Is the Right Choice

RAG is most effective when your product must answer from changing private data such as policies, docs, and internal procedures. Without retrieval grounding, even strong models drift or invent unsupported details.

Think of RAG as a system design problem: data quality, retrieval relevance, and response controls must all work together.

Key Takeaways

- Invest in source quality and metadata before retrieval tuning.

- Evaluate retrieval and generation separately so you can tell which layer caused a failure.

- Ship with citation and fallback controls from day one.

1. Prepare High-Quality Source Data

- Normalize formats and remove duplicated fragments

- Chunk by semantic units instead of fixed-size only

- Attach metadata (product, date, owner, region)

- Version sources for change tracking

Weak source hygiene produces weak retrieval no matter which model you use.
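As a rough illustration of the checklist above, here is a minimal sketch that splits a document on paragraph boundaries, drops exact duplicate fragments, and attaches metadata to every chunk. The `Chunk` class, the `chunk_by_paragraph` function, and the `max_chars` limit are hypothetical names chosen for this example, and treating a blank line as the semantic boundary is an assumption that will not suit every source format.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    metadata: dict = field(default_factory=dict)


def chunk_by_paragraph(document: str, metadata: dict, max_chars: int = 500) -> list[Chunk]:
    """Group whole paragraphs into chunks of roughly max_chars, skipping duplicates.

    Assumption: a blank line marks a semantic boundary. A single paragraph
    longer than max_chars is kept whole rather than cut mid-sentence.
    """
    seen = set()
    chunks = []
    buffer = []
    size = 0
    for paragraph in document.split("\n\n"):
        normalized = " ".join(paragraph.split())  # collapse stray whitespace
        if not normalized or normalized in seen:
            continue  # skip empty and duplicated fragments
        seen.add(normalized)
        # Flush the current chunk before it would exceed the size limit.
        if buffer and size + len(normalized) > max_chars:
            chunks.append(Chunk(" ".join(buffer), dict(metadata)))
            buffer, size = [], 0
        buffer.append(normalized)
        size += len(normalized)
    if buffer:
        chunks.append(Chunk(" ".join(buffer), dict(metadata)))
    return chunks


doc = "Refunds take 5 days.\n\nRefunds take 5 days.\n\nShipping is free over $50."
meta = {"product": "store", "date": "2024-01-05", "owner": "support", "region": "EU"}
chunks = chunk_by_paragraph(doc, meta, max_chars=30)
```

Each chunk carries its own copy of the metadata, so a later filter by product, date, owner, or region does not need to look up the parent document.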

2. Build Retrieval with Measurable Relevance

Start simple and measure retrieval quality before adding complexity.

- Baseline dense retrieval.

- Add hybrid retrieval for keyword-sensitive queries.

- Apply reranking for top-k precision improvements.

- Tune top-k and chunk overlap against a fixed set of evaluation queries.
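The hybrid step above needs some way to merge a dense ranking with a keyword ranking. This guide does not prescribe a fusion method; one common option is reciprocal rank fusion (RRF), sketched below. The function name, the example document IDs, and the constant `k = 60` (a conventional default) are assumptions for illustration only.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one ranking.

    Each document scores 1 / (k + rank) in every list where it appears,
    and the scores are summed. Ties are broken by document ID.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


dense_ranking = ["a", "b", "c"]    # hypothetical output of a dense retriever
keyword_ranking = ["c", "a", "d"]  # hypothetical output of a keyword retriever
fused = reciprocal_rank_fusion([dense_ranking, keyword_ranking])
```

RRF only uses rank positions, so it avoids having to normalize dense similarity scores against keyword scores. A reranker, if you add one, would then reorder the top of `fused`.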

3. Ground Prompts to Retrieved Context

- Require the model to answer only from supplied context

- Return citations tied to source identifiers

- Use a fallback response when context is insufficient

- Separate answer text from evidence payload
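The four controls above can be sketched in a few lines. This is an illustrative outline, not a definitive implementation: the `Passage` class, the `min_score` threshold, the fallback wording, and the square-bracket citation format are all assumptions, and the call to the model itself is left out.

```python
from dataclasses import dataclass

FALLBACK = "I don't have enough information in the provided sources to answer that."


@dataclass
class Passage:
    source_id: str
    text: str
    score: float  # retrieval relevance score, assumed to lie in [0, 1]


def build_grounded_prompt(question: str, passages: list[Passage], min_score: float = 0.5):
    """Return a context-only prompt, or None when no passage is relevant enough."""
    usable = [p for p in passages if p.score >= min_score]
    if not usable:
        return None  # caller should return FALLBACK without calling the model
    context = "\n".join(f"[{p.source_id}] {p.text}" for p in usable)
    return (
        "Answer using only the context below. "
        "Cite source identifiers in square brackets. "
        f'If the context is insufficient, reply exactly: "{FALLBACK}"\n\n'
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def package_response(answer_text: str, passages: list[Passage]) -> dict:
    """Keep the answer text separate from the evidence payload."""
    cited = [p.source_id for p in passages if f"[{p.source_id}]" in answer_text]
    return {"answer": answer_text, "evidence": cited}


passages = [
    Passage("policy-12", "Refunds are issued within 5 days.", 0.8),
    Passage("faq-3", "Shipping is free.", 0.2),
]
prompt = build_grounded_prompt("How long do refunds take?", passages)
response = package_response("Refunds take 5 days [policy-12].", passages)
```

Returning the evidence as a separate field lets the UI render citations, and lets monitoring verify them, without parsing the answer text again.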

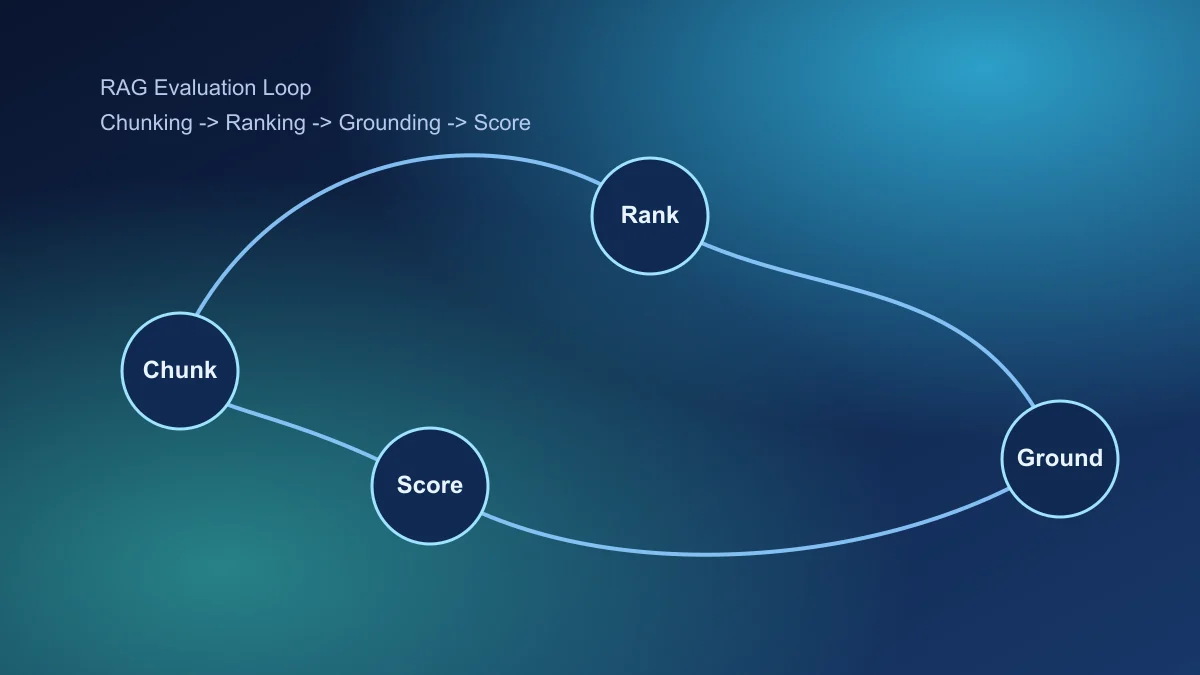

4. Evaluate RAG in Two Layers

- Retrieval layer: hit rate, ranking quality, evidence relevance

- Generation layer: grounded correctness, citation validity, abstention quality

Track failure categories (for example, missed retrieval versus unsupported generation) so fixes are targeted and fast.
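To make the two layers concrete, here is a small sketch of one possible metric set: hit rate at k and mean reciprocal rank for retrieval, plus a simple citation validity check for generation. The function names and the toy evaluation data are hypothetical, and scoring an answer with no citations as 0.0 is a choice made for this example, not a standard.

```python
def hit_rate_at_k(results: dict[str, list[str]], relevant: dict[str, set[str]], k: int) -> float:
    """Fraction of queries with at least one relevant document in the top k."""
    hits = sum(1 for q, ranked in results.items() if set(ranked[:k]) & relevant[q])
    return hits / len(results)


def mean_reciprocal_rank(results: dict[str, list[str]], relevant: dict[str, set[str]]) -> float:
    """Average of 1 / rank of the first relevant document (0 when none is found)."""
    total = 0.0
    for q, ranked in results.items():
        for rank, doc_id in enumerate(ranked, start=1):
            if doc_id in relevant[q]:
                total += 1.0 / rank
                break
    return total / len(results)


def citation_validity(cited_ids: list[str], retrieved_ids: list[str]) -> float:
    """Fraction of cited sources that were actually in the retrieved context."""
    if not cited_ids:
        return 0.0  # assumption: an uncited answer counts as unverified
    retrieved = set(retrieved_ids)
    return sum(1 for c in cited_ids if c in retrieved) / len(cited_ids)


# Toy evaluation set: ranked retrieval output and labeled relevant documents.
results = {"q1": ["a", "b", "c"], "q2": ["d", "e", "f"]}
relevant = {"q1": {"b"}, "q2": {"z"}}

hit_rate = hit_rate_at_k(results, relevant, k=2)
mrr = mean_reciprocal_rank(results, relevant)
validity = citation_validity(["a", "x"], ["a", "b"])
```

Here `q2` is a retrieval failure (no relevant document was returned), while the invalid citation `x` is a generation failure. Keeping the scores separate shows which layer to fix.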

5. Add Monitoring and Drift Detection

- Unanswered query clusters

- Citation mismatch alerts

- Latency by query class

- Knowledge freshness lag

RAG quality decays without source and retrieval maintenance loops.
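Two of the signals above, knowledge freshness lag and latency by query class, are easy to compute from plain logs. The sketch below assumes you can export per-source timestamps and `(query_class, latency_ms)` events; the function names, the two-day threshold, and the sample data are hypothetical.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import median


def stale_sources(source_updated: dict, indexed_at: dict, now: datetime, max_lag: timedelta) -> list[str]:
    """Sources that changed after their last indexing and have waited longer than max_lag."""
    stale = []
    for source_id, updated in source_updated.items():
        indexed = indexed_at.get(source_id)
        index_is_behind = indexed is None or indexed < updated
        if index_is_behind and now - updated > max_lag:
            stale.append(source_id)
    return sorted(stale)


def median_latency_by_class(events: list[tuple[str, float]]) -> dict:
    """Median latency in milliseconds for each query class."""
    by_class = defaultdict(list)
    for query_class, latency_ms in events:
        by_class[query_class].append(latency_ms)
    return {query_class: median(values) for query_class, values in by_class.items()}


now = datetime(2024, 1, 10)
source_updated = {
    "policy": datetime(2024, 1, 5),
    "faq": datetime(2024, 1, 9),
    "guide": datetime(2024, 1, 1),
}
indexed_at = {
    "policy": datetime(2024, 1, 2),
    "faq": datetime(2024, 1, 8),
    "guide": datetime(2024, 1, 3),
}

stale = stale_sources(source_updated, indexed_at, now, max_lag=timedelta(days=2))
latency = median_latency_by_class([("lookup", 100), ("lookup", 300), ("summary", 900)])
```

In this sample only `policy` is flagged: `faq` is behind but still within the allowed lag, and `guide` was indexed after its last change.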

6. Launch with Confidence Boundaries

Restrict high-risk intents in v1, log all low-confidence outputs, and review those logs weekly to decide on policy and content updates.
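A launch gate along these lines might look like the sketch below. It assumes an upstream step already supplies an intent label and a confidence score; the intent names, the `0.7` threshold, the refusal wording, and the logger name are placeholders rather than recommendations.

```python
import logging

logger = logging.getLogger("rag.review")

# Hypothetical list of intents that v1 does not answer.
RESTRICTED_INTENTS = {"legal_advice", "medical_advice"}
REFUSAL = "This topic is not supported yet. Please contact the responsible team."


def gate_response(intent: str, answer: str, confidence: float, threshold: float = 0.7) -> dict:
    """Block restricted intents and flag low-confidence answers for weekly review."""
    if intent in RESTRICTED_INTENTS:
        return {"text": REFUSAL, "needs_review": False}
    needs_review = confidence < threshold
    if needs_review:
        # Logged so the weekly review can spot policy and content gaps.
        logger.warning("low-confidence output: intent=%s confidence=%.2f", intent, confidence)
    return {"text": answer, "needs_review": needs_review}


blocked = gate_response("legal_advice", "You should sue.", confidence=0.99)
flagged = gate_response("billing", "Invoices are sent monthly.", confidence=0.4)
passed = gate_response("billing", "Invoices are sent monthly.", confidence=0.9)
```

Whether a low-confidence answer is still shown to the user, as it is here, or replaced by a fallback response is a product decision. The sketch only makes sure every such output is logged.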

Final Takeaway

Production RAG is less about model hype and more about disciplined data, retrieval, and evaluation operations.


Frequently Asked Questions

What is the biggest reason RAG apps fail?

Most failures come from weak source quality and poor retrieval tuning, not from model choice.

How should I chunk documents for RAG?

Chunk by semantic boundaries with overlap, then validate retrieval hit quality against real user questions.

What metric matters most in RAG evaluation?

Grounded answer correctness with citation reliability is the most important metric for production trust.