A user feedback analysis pipeline is most useful when applied to one real team process, not as a broad transformation project. Use this guide as a working document: pick one process, instrument it, and improve it week by week.

Key Takeaways

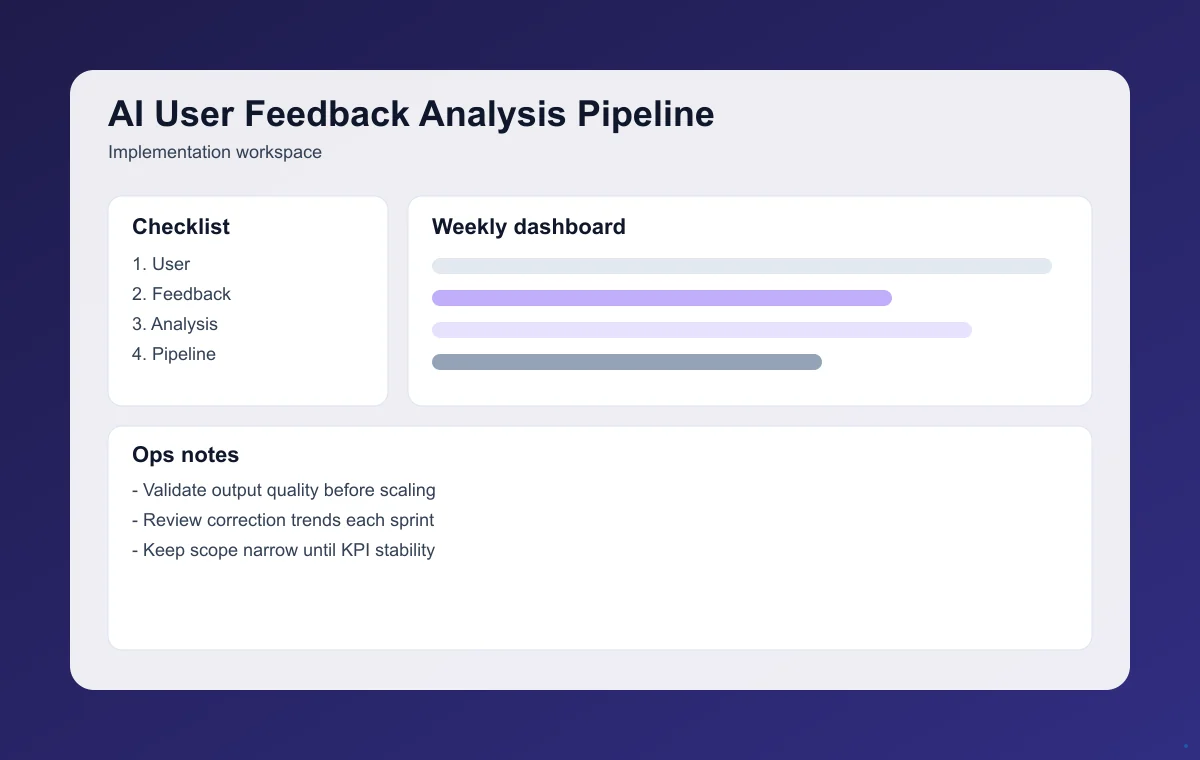

- Treat implementation as an operating system, not a one-off project.

- Make metric reviews a fixed weekly ritual.

- Prioritize changes by expected lift on signal quality and roadmap decisions.

1. Define scope before tools

Treat v1 as an evidence sprint. Pick one use case where outcomes can be verified weekly instead of launching broad, hard-to-measure automation.

2. Design the end-to-end workflow

Operational quality depends on explicit transitions. Document where humans approve, where automation runs, and where exceptions are routed.

- Input and context collection

- AI generation or decision stage

- Human review and approval

- Action execution and logging
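The four stages above can be sketched as a small chain of functions. This is a minimal illustration, not a reference implementation: `FeedbackItem`, `generate_theme`, `review`, `execute`, and `run` are hypothetical names, and a keyword rule stands in for whatever AI step a team actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class FeedbackItem:
    text: str
    source: str                           # illustrative, e.g. "support_ticket"
    proposed_theme: Optional[str] = None
    status: str = "collected"             # stage 1: input and context collected
    log: List[str] = field(default_factory=list)


def generate_theme(item: FeedbackItem) -> FeedbackItem:
    # Stage 2: placeholder for the AI step (assumption: a keyword rule, not a model).
    item.proposed_theme = "billing" if "invoice" in item.text.lower() else "other"
    item.status = "generated"
    item.log.append(f"generated theme={item.proposed_theme}")
    return item


def review(item: FeedbackItem, approve: Callable[[FeedbackItem], bool]) -> FeedbackItem:
    # Stage 3: nothing moves to execution without an explicit approval decision.
    item.status = "approved" if approve(item) else "exception"
    item.log.append(f"review -> {item.status}")
    return item


def execute(item: FeedbackItem) -> FeedbackItem:
    # Stage 4: approved items trigger the action; exceptions go to a manual queue.
    if item.status == "approved":
        item.status = "executed"
        item.log.append(f"tagged as {item.proposed_theme}")
    else:
        item.log.append("routed to manual queue")
    return item


def run(item: FeedbackItem, approve: Callable[[FeedbackItem], bool]) -> FeedbackItem:
    return execute(review(generate_theme(item), approve))
```

Every transition writes to `log`, so the approval point and the exception route stay visible when the process is audited later.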

3. Instrument metrics from day one

Use one KPI scorecard with leading and lagging indicators. A single scorecard gives prioritization a shared, measurable basis and keeps iteration focused on signal quality and roadmap decisions.
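One way to represent such a scorecard is a short list of indicators, each with a target and a direction. The sketch below is an assumption about structure only: the indicator names, targets, and values are invented for illustration and are not recommended benchmarks.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Indicator:
    name: str
    kind: str                      # "leading" or "lagging"
    target: float
    actual: float
    higher_is_better: bool = True

    def on_track(self) -> bool:
        if self.higher_is_better:
            return self.actual >= self.target
        return self.actual <= self.target


def off_track(scorecard: List[Indicator]) -> List[str]:
    """Return the names of indicators missing target, leading indicators first."""
    misses = [i for i in scorecard if not i.on_track()]
    misses.sort(key=lambda i: i.kind != "leading")   # stable sort keeps input order within a kind
    return [i.name for i in misses]


# Hypothetical values for one weekly review.
scorecard = [
    Indicator("roadmap_items_backed_by_feedback", "lagging", target=0.60, actual=0.45),
    Indicator("theme_label_precision", "leading", target=0.85, actual=0.80),
    Indicator("reviewer_correction_rate", "leading", target=0.10, actual=0.08,
              higher_is_better=False),
]

print(off_track(scorecard))
# ['theme_label_precision', 'roadmap_items_backed_by_feedback']
```

Listing leading indicators first is a design choice: they tend to move before the lagging ones, so they are the earlier place to look for a cause.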

4. Run a weekly execution loop

- Select the highest-leverage bottleneck from last week's data.

- Deploy one focused improvement with monitoring.

- Audit edge cases and correction workload.

- Feed learnings into next sprint planning and documentation.
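The first step of the loop, picking a bottleneck from last week's data, can be as simple as ranking pipeline stages by how much reviewer time they consumed. The sketch below assumes a hypothetical correction log of `(stage, minutes_spent)` pairs; the stage names and numbers are made up.

```python
from collections import Counter

# Hypothetical log of last week's reviewer corrections: (stage, minutes_spent).
corrections = [
    ("theme_labeling", 4), ("theme_labeling", 6), ("dedup", 3),
    ("sentiment", 2), ("theme_labeling", 5), ("dedup", 7),
]


def top_bottleneck(rows):
    """Return (stage, total_minutes) for the stage with the most correction time."""
    minutes = Counter()
    for stage, spent in rows:
        minutes[stage] += spent
    return minutes.most_common(1)[0]


stage, cost = top_bottleneck(corrections)
print(stage, cost)   # theme_labeling 15
```

Correction minutes are only one possible proxy for leverage. A team could rank by exception count or by impact on a scorecard indicator instead, as long as the same measure is used each week.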

5. Avoid common implementation mistakes

- Launching without KPI targets and a review rhythm

- Skipping the QA checkpoint before automated actions

- Treating one week of gains as proof of stability

- Ignoring user correction patterns in prioritization

Final takeaway

A user feedback analysis pipeline drives results when teams treat it as a product operating system: focused scope, clear metrics, and disciplined weekly iteration.


60-Second Summary

- Pick one KPI and one owner before expanding scope.

- Ship improvements weekly with explicit fallback behavior.

Frequently Asked Questions

What team setup works best for a user feedback analysis pipeline?

Use a small cross-functional pod: product owner, operator, and technical implementer with one shared KPI target.

How do we handle edge cases safely?

Define fallback behavior and human escalation before rollout, then monitor exception rates in every review cycle.
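As a rough sketch of what "fallback behavior" and "exception rate" can mean in code: wrap the automated step so that errors and low-confidence results are escalated to a person, then track the share of escalations per review cycle. The `classify` callable, the `0.7` threshold, and the `needs_human_review` label are all assumptions for illustration.

```python
def classify_with_fallback(text, classify, min_confidence=0.7):
    """Return (label, escalated). Errors and low-confidence results go to a human."""
    try:
        label, confidence = classify(text)
    except Exception:
        return "needs_human_review", True
    if confidence < min_confidence:
        return "needs_human_review", True
    return label, False


def exception_rate(results):
    """Share of items escalated in one review cycle (0.0 for an empty cycle)."""
    if not results:
        return 0.0
    return sum(1 for _, escalated in results if escalated) / len(results)


def stub_classifier(text):
    # Hypothetical stand-in for a real model: returns (label, confidence).
    if not text:
        raise ValueError("empty input")
    return ("bug", 0.9) if "crash" in text else ("other", 0.4)


results = [classify_with_fallback(t, stub_classifier)
           for t in ["app crash on login", "hmm", ""]]
print(results[0])   # ('bug', False)
```

Reviewing `exception_rate(results)` each cycle shows whether a change made the automated stage more or less reliable, independent of how the final labels look.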

How soon can we expect measurable impact?

Directional movement typically shows up within 1-2 weeks, provided instrumentation is live and each change is tied to signal quality and roadmap decisions.